When developing or training applications that rely on NVIDIA GPUs, few errors are as confusing and disruptive as the “CUDA device-side assert triggered” message. This error often appears without much context and can abruptly terminate model training, crash an application, or corrupt GPU state. Developers working with frameworks such as PyTorch, TensorFlow, or custom CUDA kernels frequently encounter it during debugging. Understanding its causes and solutions is essential for maintaining stable and efficient GPU-accelerated workflows.

TL;DR: The CUDA device-side assert triggered error occurs when a GPU kernel encounters an invalid condition, such as out-of-bounds indexing or incorrect label values. It often stems from mismatched tensor dimensions, incorrect class indices, or faulty custom CUDA code. Because CUDA operations are asynchronous, the actual error may appear far from the real cause. Enabling synchronous execution and validating inputs are the fastest ways to debug and resolve it.

What Does “CUDA Device-Side Assert Triggered” Mean?

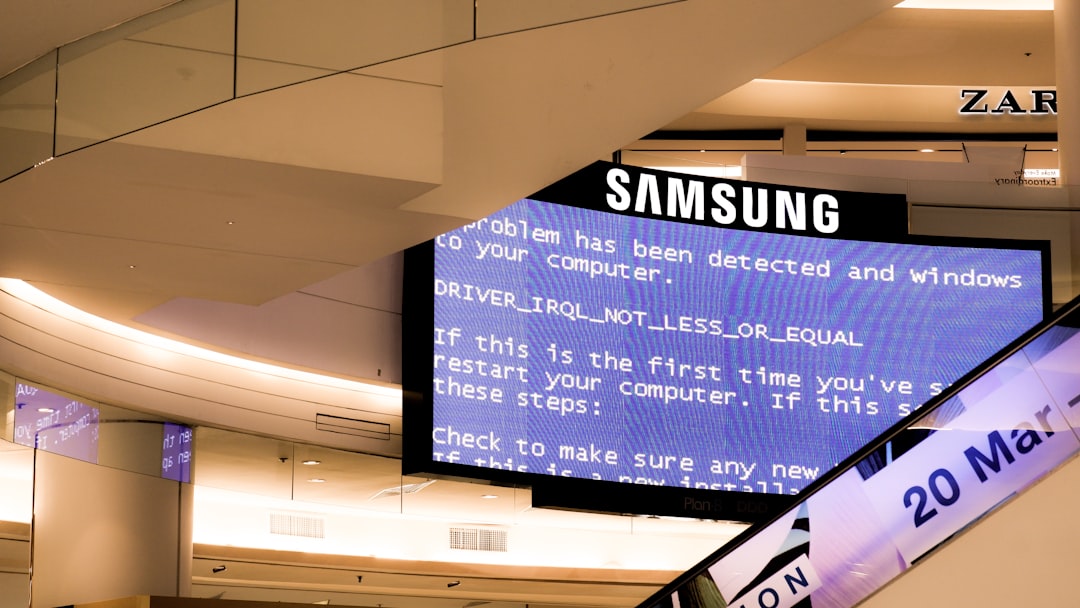

In CUDA programming, an assert is a runtime check used to verify that certain conditions are true during execution. When an assertion fails on the GPU (device side), CUDA raises an error. Unlike typical CPU errors, device-side assertion failures are harder to trace because GPU operations are typically asynchronous.

Once triggered, this error typically leaves the CUDA context of the running process in an unusable state. Subsequent CUDA calls, even correct ones, may fail until the process or notebook runtime is restarted. In machine learning frameworks, the error commonly appears as:

- RuntimeError: CUDA error: device-side assert triggered

- CUDA kernel errors might be asynchronously reported

This indirect reporting makes root cause analysis more complicated.

Why CUDA Errors Are Difficult to Debug

CUDA operations execute asynchronously by default. This means that the CPU queues operations to the GPU and continues executing without waiting for results. If a CUDA kernel fails, the error may not appear until a later synchronization point, such as:

- Calling loss.backward()

- Accessing a tensor’s value with .item()

- Transferring data between CPU and GPU

As a result, the stack trace often points to the wrong line of code, misleading developers during troubleshooting.
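One way to narrow the gap between the failing kernel and the reported line is to add explicit synchronization points while debugging. The sketch below assumes PyTorch; `sync_point` is a hypothetical helper name, not part of any library. It calls `torch.cuda.synchronize()` after a block of work so that a pending kernel error surfaces there rather than at some later call.

```python
import contextlib

import torch


@contextlib.contextmanager
def sync_point(name):
    """Wait for queued GPU work after the wrapped block (debugging aid)."""
    try:
        yield
        if torch.cuda.is_available():
            # Blocks until all queued kernels finish, so a pending
            # device-side error is raised here, not at a later call.
            torch.cuda.synchronize()
    except RuntimeError as err:
        raise RuntimeError(f"error surfaced during step '{name}'") from err


device = "cuda" if torch.cuda.is_available() else "cpu"
x = torch.arange(6.0, device=device).reshape(2, 3)
with sync_point("matmul"):
    y = x @ x.T
print(tuple(y.shape))
```

Synchronizing after every step slows execution, so wrappers like this are best removed once the failing step has been found.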

Common Causes of CUDA Device-Side Assert Triggered Errors

1. Invalid Class Labels in Classification Tasks

One of the most frequent causes occurs in deep learning classification tasks. Loss functions such as CrossEntropyLoss require target labels to fall within a specific range:

- Labels must be integers

- Values must be between 0 and num_classes - 1

If a dataset contains labels outside this range, the GPU kernel asserts, leading to failure.

Example: If a model outputs predictions for 5 classes (0–4), but a label value of 5 appears in the dataset, a device-side assert will be triggered.
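The 5-class example can be checked with a few lines of plain Python before a batch ever reaches the GPU. This is a minimal sketch; `find_invalid_labels` is a hypothetical helper, and it assumes the labels are available as an ordinary sequence of integers (for a PyTorch tensor, `targets.tolist()` would provide one).

```python
def find_invalid_labels(labels, num_classes):
    """Return (position, value) pairs for labels outside [0, num_classes - 1]."""
    return [
        (pos, value)
        for pos, value in enumerate(labels)
        if not 0 <= value < num_classes
    ]


labels = [0, 3, 5, 1, -1, 4]
print(find_invalid_labels(labels, num_classes=5))  # [(2, 5), (4, -1)]
```

If the loss is configured to skip a sentinel value (PyTorch's CrossEntropyLoss has an `ignore_index` parameter for this), that value would need to be excluded from the check.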

2. Out-of-Bounds Tensor Indexing

Another frequent issue is indexing tensors beyond their allocated size. This includes:

- Incorrect embedding indices

- Improper slicing operations

- Mismatched tensor dimensions

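Embedding layers are particularly sensitive because they directly use indices to access memory locations. If an index is negative or is greater than or equal to the vocabulary size, the lookup falls outside the embedding table and the kernel's bounds check triggers the assert.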

3. Mismatched Tensor Shapes

Matrix multiplications and loss calculations rely on shape compatibility. When tensor sizes do not align correctly, the GPU may encounter invalid memory access patterns, triggering assertions.

While some shape mismatches produce explicit errors, others may propagate silently until deep inside a kernel execution.

4. Data Type Inconsistencies

Using incorrect tensor types can cause assertion failures. Examples include:

- Passing floating-point labels to classification loss functions expecting integers

- Mixing CPU and GPU tensors improperly

- Using incompatible tensor precision formats
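For the floating-point label case, a conversion step can refuse values that are not whole numbers instead of silently truncating them. The helper below is a sketch with a hypothetical name (`to_class_indices`) and works on a plain Python sequence.

```python
def to_class_indices(labels):
    """Convert numeric labels to ints, refusing values that are not whole numbers."""
    indices = []
    for pos, value in enumerate(labels):
        if not float(value).is_integer():
            raise ValueError(f"label at position {pos} is not a class index: {value!r}")
        indices.append(int(value))
    return indices


print(to_class_indices([0.0, 2.0, 1.0]))  # [0, 2, 1]
```

In PyTorch the equivalent final step would be a cast such as `targets.long()`, since class-index targets are expected to be 64-bit integers.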

5. Custom CUDA Kernel Errors

Developers writing custom CUDA C++ kernels may insert manual assert() statements. If a condition fails, the kernel stops and the failure is reported to the host at the next synchronization point. Bugs in memory handling, thread indexing, or boundary checks are common triggers.

How to Diagnose the Error

Enable Synchronous Execution

The most effective first step is forcing CUDA to run synchronously. This can be done by setting the environment variable:

CUDA_LAUNCH_BLOCKING=1

This forces the CPU to wait for each GPU operation to finish before proceeding, so the stack trace points to the actual failing line.
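The variable can also be set from Python. The sketch below assumes it is read when CUDA is first initialized, so the assignment is placed at the very top of the entry script, before the framework is imported.

```python
import os

# Set before the framework initializes CUDA; placing this above
# "import torch" (or any other GPU library import) is the safe choice.
os.environ["CUDA_LAUNCH_BLOCKING"] = "1"

print(os.environ["CUDA_LAUNCH_BLOCKING"])  # 1
```

Synchronous launches are slower, so the setting is best removed once the failing line has been identified.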

Reproduce the Error on CPU

In many deep learning frameworks, switching to CPU can provide clearer error messages. For example:

- Move model and data to CPU

- Run the same training loop

- Observe detailed output

CPU execution often reveals index-out-of-range or dimension mismatch errors more clearly.
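As an illustration, the sketch below assumes PyTorch and runs an embedding lookup with an out-of-range index on the CPU. The sizes and index values are made up for the example. On the CPU the same mistake surfaces as an ordinary Python exception at the offending line.

```python
import torch
import torch.nn as nn

embedding = nn.Embedding(num_embeddings=10, embedding_dim=4)  # valid ids: 0..9
token_ids = torch.tensor([1, 7, 10])  # 10 is out of range

try:
    embedding(token_ids)  # runs on CPU because nothing was moved to the GPU
    message = None
except IndexError as err:
    message = str(err)

print(message)
```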

Validate Dataset Labels

Before training begins, datasets should be validated by:

- Checking maximum and minimum label values

- Ensuring consistent data types

- Confirming class count matches model output size

Simple sanity checks can prevent hours of debugging.
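A dataset-level report along these lines can be produced with the standard library alone. This is a sketch: `summarize_labels` and the keys of its result are hypothetical names, and it assumes a non-empty sequence of labels.

```python
from collections import Counter


def summarize_labels(labels, num_outputs):
    """Report label statistics and whether they fit a model with num_outputs classes."""
    counts = Counter(labels)
    lo, hi = min(counts), max(counts)
    return {
        "min": lo,
        "max": hi,
        "all_int": all(isinstance(v, int) for v in counts),
        "fits_model": lo >= 0 and hi < num_outputs,
        "unused_classes": sorted(set(range(num_outputs)) - set(counts)),
    }


report = summarize_labels([1, 2, 3, 4, 5, 5, 2], num_outputs=5)
print(report)
# {'min': 1, 'max': 5, 'all_int': True, 'fits_model': False, 'unused_classes': [0]}
```

A maximum equal to the class count combined with an unused class 0, as here, usually indicates labels that start at 1 instead of 0.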

Check Tensor Shapes Explicitly

Printing tensor sizes before feeding them into critical operations can expose mismatches early:

print(tensor.shape)

Assertions at the Python level are easier to debug than device-side failures.
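A Python-level check of this kind might look like the sketch below. It assumes a classification setup with model outputs of shape (batch, classes) and targets of shape (batch,); `check_batch_shapes` is a hypothetical helper. PyTorch's `tensor.shape` behaves like a tuple, so it can be passed in directly.

```python
def check_batch_shapes(logits_shape, targets_shape):
    """Raise early if a (batch, classes) output and a (batch,) target disagree."""
    if len(logits_shape) != 2:
        raise ValueError(f"expected (batch, classes), got {tuple(logits_shape)}")
    if len(targets_shape) != 1:
        raise ValueError(f"expected (batch,), got {tuple(targets_shape)}")
    if logits_shape[0] != targets_shape[0]:
        raise ValueError(
            f"batch size mismatch: {logits_shape[0]} vs {targets_shape[0]}"
        )


check_batch_shapes((8, 5), (8,))  # passes silently
```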

Step-by-Step Troubleshooting Workflow

- Restart the runtime to reset GPU state after the assert.

- Set CUDA_LAUNCH_BLOCKING=1.

- Run the application with a small batch size.

- Validate label ranges and embedding indices.

- Check tensor dimensions before critical operations.

- If using custom kernels, inspect boundary checks.

Preventative Best Practices

Implement Input Validation

Adding validation checks before GPU computation reduces risk significantly. These checks should include:

- Label range verification

- Shape compatibility assertions

- Data type enforcement

Use Defensive Programming in Custom Kernels

In CUDA C++ kernels, careful boundary checking is essential. Thread indexing should ensure no thread accesses memory beyond allocated ranges.
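The reason the guard matters can be seen in a small simulation. The block below is plain Python, not a CUDA kernel: it mimics a one-dimensional launch in which the number of blocks is rounded up, so the last block contains threads whose global index lies past the end of the data. The function name and parameters are illustrative only.

```python
def simulate_launch(n, block_dim):
    """Simulate a 1D kernel launch and return the indices that pass the guard."""
    grid_dim = (n + block_dim - 1) // block_dim  # round up so every element is covered
    touched = []
    skipped = 0
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            idx = block_idx * block_dim + thread_idx  # global thread index
            if idx < n:  # boundary guard; without it the extra threads go out of bounds
                touched.append(idx)
            else:
                skipped += 1
    return touched, skipped


touched, skipped = simulate_launch(n=10, block_dim=4)
print(len(touched), skipped)  # 10 2
```

With 10 elements and 4 threads per block, 12 threads are launched, and the two surplus threads are exactly the ones the `idx < n` check has to turn away.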

Unit Test Small Batches

Testing with minimal data isolates issues quickly. Small batches are easier to inspect and debug compared to full-scale training jobs.

Keep Frameworks Updated

Occasionally, CUDA assertion errors may stem from compatibility issues between:

- CUDA toolkit version

- NVIDIA driver version

- Deep learning framework version

Ensuring compatibility helps eliminate environment-related issues.
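When checking compatibility, it helps to record what is actually installed. The sketch below assumes PyTorch and prints the framework version and the CUDA version it was built against. The driver version is not included; it is reported by the `nvidia-smi` command-line tool.

```python
import torch

versions = {
    "torch": torch.__version__,
    "cuda_build": torch.version.cuda,  # None on CPU-only builds
    "cuda_available": torch.cuda.is_available(),
}
if versions["cuda_available"]:
    versions["device"] = torch.cuda.get_device_name(0)

for key, value in versions.items():
    print(f"{key}: {value}")
```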

CUDA Debugging Tools Comparison

| Tool | Primary Use | Ease of Use | Best For |
|---|---|---|---|
| CUDA_LAUNCH_BLOCKING=1 | Synchronous error tracing | Very Easy | Quick debugging |
| CPU Execution | Clearer stack traces | Easy | Label and shape validation |
| NVIDIA Compute Sanitizer | Memory error detection | Advanced | Custom CUDA kernels |
| Nsight Systems | Performance profiling | Moderate | Large scale systems |

Conclusion

The CUDA device-side assert triggered error is not inherently mysterious, but it requires a methodical debugging approach. Most occurrences stem from invalid indices, incorrect labels, or tensor mismatches in deep learning pipelines. Because CUDA executes asynchronously, identifying the root cause demands forcing synchronization and validating inputs carefully.

By applying structured debugging practices, enabling synchronous execution, and validating data before computation, developers can resolve these errors quickly and prevent future occurrences. GPU programming demands precision, but with the right strategy, even cryptic device-side asserts become manageable challenges.

FAQ

What does “device-side assert triggered” mean in simple terms?

It means the GPU encountered an invalid condition during execution, such as accessing memory out of bounds or using invalid indices, and terminated the operation.

Why does the error persist after fixing the code?

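Once triggered, the assert typically leaves the CUDA context of the running process unusable, so even corrected code fails in the same session. Restarting the process or notebook runtime is usually required before further GPU work succeeds.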

How does CUDA_LAUNCH_BLOCKING help?

It forces synchronous execution, ensuring the stack trace shows the correct line where the error occurs.

Can this error damage GPU hardware?

No. It is a software-level runtime error and does not physically harm the GPU.

Is it always caused by invalid labels?

No. While invalid classification labels are a common cause, tensor shape mismatches, embedding index errors, and custom CUDA kernel bugs can also trigger it.

How can embedding layers cause this error?

If an input index is negative or is greater than or equal to the vocabulary size defined in the embedding layer, the lookup falls outside the embedding table, resulting in a device-side assertion failure.

Should debugging start on CPU or GPU?

Starting on CPU is often easier because error messages are more descriptive. Once confirmed working, the code can be moved back to GPU.